University of Toronto | Faculty of Applied Science and Engineering

All contents copyright © Autonomous Systems and Biomechatronics Lab.

Social and Personal Robots

Our research in this area focuses on the development of human-like social robots with the social functionalities and behavioural norms required to engage humans in natural assistive interactions, such as providing 1) reminders, 2) health monitoring, and 3) social and cognitive training interventions. For these robots to take part successfully in social human-robot interaction (HRI), they must be able to interpret human social cues. They can do so by perceiving and interpreting a person's natural communication modes, such as speech, paralanguage (intonation, pitch, and volume of voice), body language, and facial expressions.

Interactive robots developed for social human-robot interaction (HRI) scenarios need to be socially intelligent in order to engage in natural bi-directional communication with humans. Social intelligence allows a robot to share information with, relate to, and interact with people in human-centered environments. It can make interactions more effective and engaging and, in turn, improve acceptance of the robot by its intended users.

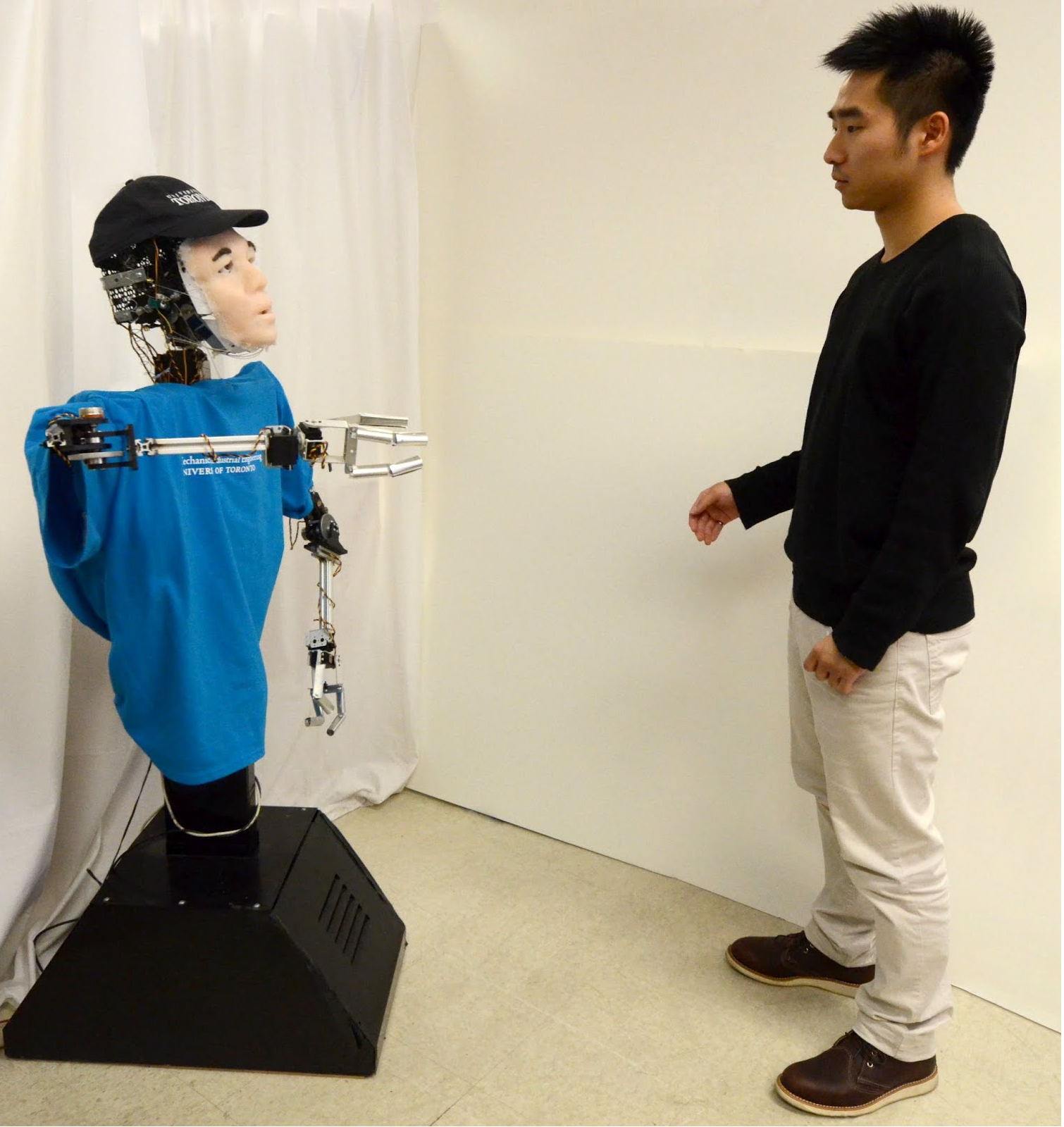

Brian 2.1 during a one-on-one interaction.

We have been developing automated real-time affect (emotion, mood and attitude) detection and classification systems that recognize natural modes of human communication, including:

1) body language,

2) paralanguage, and

3) facial expressions.

Our social robots interpret these cues in order to effectively engage a person in a desired activity by displaying appropriate emotion-based behaviours.

Videos:

Example Brian 2.1 behaviours during accessibility-aware Robot Tutor and Robot Restaurant Finder interactions:

Shane Saunderson presenting on the importance of considering communication directness and robot familiarity in HRI:

Funding Sources: Natural Sciences and Engineering Research Council of Canada (NSERC), the Canada Research Chairs (CRC) Program, AGE-WELL (which is supported by the Government of Canada through the Networks of Centres of Excellence (NCE)), Canada Foundation for Innovation (CFI) and Ontario Research Fund (ORF).